- [+] expand all

- Getting started

- Vespa Overview

- Features

- Getting Started

- Vespa CLI

- Getting Started with Ranking

- Tutorials

- Vespa API and Interfaces

- Frequently Asked Questions - FAQ

- Glossary

- Schemas and documents

- Documents

- Schemas

- Parent/Child

- Annotations API

- Concrete document types

- Reading and writing

- Reads and Writes

- /document/v1

- Visiting

- Vespa Feed Client

- Indexing

- Document API

- Partial Updates

- Querying

- Query API

- Vespa Query Language

- Grouping Information in Results

- Federation

- Query Profiles

- Nearest Neighbor Search

- Approximate Nearest Neighbor Search

- Nearest Neighbor Search Guide

- Text matching

- Geo Search

- Predicate Fields

- Streaming Search

- Document Summaries

- Result Renderers

- Page Templates

- Ranking and ML models

- Ranking Introduction

- Rank Features and Expressions

- Embedding

- Multivalue Query Operators

- Tensor User Guide

- Tensor Examples

- Phased Ranking

- Searcher Re-Ranking

- Cross-Encoder Transformer Ranking

- Ranking With TensorFlow Models

- Ranking With ONNX Models

- Ranking With XGBoost Models

- Ranking With LightGBM Models

- Stateless model evaluation

- Ranking With BM25

- Ranking With nativeRank

- Accelerated OR search using the WAND algorithm

- Linguistics and text processing

- Linguistics in Vespa

- Query Rewriting

- Embedding text

- Troubleshooting character encoding

- Lucene Linguistics

- Tutorials and quick starts

- News 1: Getting Started

- News 2: Application Packages, Feeding, Query

- News 3: Sorting, Grouping and Ranking

- News 4: Embeddings

- News 5: Partial Updates, ANNs, Filtering

- News 6: Custom Searchers, Document Processors

- News 7: Parent-Child, Tensor Ranking

- Models Hot Swap

- Text Search

- Text Search ML

- Semantic Text Search

- Quick Start

- Quick Start Java

- Applications and components

- Developer Guide

- Application Packages

- Unit Testing

- Testing

- Testing Reference

- Testing Reference Java

- Java Serving Container

- Container Components

- Request-Response Processing

- Searcher Development

- Document Processor Development

- Developing Web Service Applications

- Component Injection

- Chained Components

- Configuring Java components

- Bundles

- Using ZooKeeper

- Developing request handlers

- Building an HTTP API using request handlers and processors

- Configuring Http Servers and Filters

- Using Libraries for Pluggable Frameworks

- Developing server providers

- Server Tutorial

- LLMs in Vespa

- Content clusters

- Elasticity

- Proton

- Content Nodes and States

- Consistency Model

- Distribution Algorithm

- Buckets

- Performance and tuning

- Performance intro

- Practical performance guide

- Serving Sizing Guide

- Feed Sizing Guide

- Sizing Examples

- Document Attributes

- Benchmarking

- Profiling

- Container Tuning

- Rate-Limiting Search Requests

- HTTP/2

- Graceful Query Coverage Degradation

- Caches

- HTTP Performance Testing

- Feature Tuning

- Valgrind

- Operations

- Metrics

- Logs

- Access Logging

- Batch delete

- Feed block

- Reindexing

- Tools

- Operations - selfhosted

- Multinode Systems

- Administrative Procedures

- Files, Processes, Ports, Environment

- Node Setup

- Content node recovery

- Using Kubernetes with Vespa

- Securing a Vespa installation

- mTLS

- Configuration Servers

- Live Vespa upgrade procedure

- Config Sentinel

- Config Proxy

- Docker Containers

- Vespa Command-line Tools

- Docker Containers GPU setup

- Service Location Broker

- Change from attribute to index procedure

- Container

- Monitoring

- Routing

- Configuration reference

- Application Package Reference

- Schema Reference

- services.xml

- services.xml - admin

- services.xml - container

- services.xml - content

- services.xml - docproc

- services.xml - http

- services.xml - processing

- services.xml - search

- hosts.xml

- validation-overrides.xml

- Indexing Language Reference

- Custom Configuration File Reference

- mTLS Reference

- Internal Configuration File Reference

- Healthchecks Reference

- /state/v1 API Reference

- Deploy API

- HTTP Config API

- /application/v2/tenant API Reference

- /cluster/v2 API Reference

- /metrics/v1 API Reference

- /metrics/v2 API Reference

- /prometheus/v1 API Reference

- Ranking and ML models reference

- Ranking Expressions

- Tensor Evaluation Reference

- nativeRank Reference

- Rank Feature Reference

- String Segment Match

- Rank Feature Configuration

- Rank Types

- Stateless Model Reference

- Embedding Model Reference

- Queries and results reference

- Query API Reference

- Query Language Reference

- Simple Query Language Reference

- Select Reference

- Grouping Reference

- Sorting Reference

- Query Profile Reference

- Semantic Rule Language Reference

- Default JSON Result Format

- Page Templates Syntax

- Page Result Format

- Inspecting Structured Data in a Searcher

- Low-level request handler APIs

- Document API reference

- /document/v1 API reference

- Document JSON Format

- Document Field Path Syntax

- Document Selector Language

- Component reference

- Component Reference

- Metrics reference

- Vespa Metric Set Reference

- Default Metric Set Reference

- Container Metrics Reference

- Distributor Metrics Reference

- Searchnode Metrics Reference

- Storage Metrics Reference

- Configserver Metrics Reference

- Logd Metrics Reference

- Node Admin Metrics Reference

- Slobrok Metrics Reference

- Clustercontroller Metrics Reference

- Sentinel Metrics Reference

- Metric Units Reference

- Utilities and libraries

- Predicate Search Java Library

- pyvespa: Getting Started

Ranking, in general, becomes more accurate by using complex expressions which use many features.

Read the query API guide to get an overview of how queries are executed in Vespa, before continuing this guide.

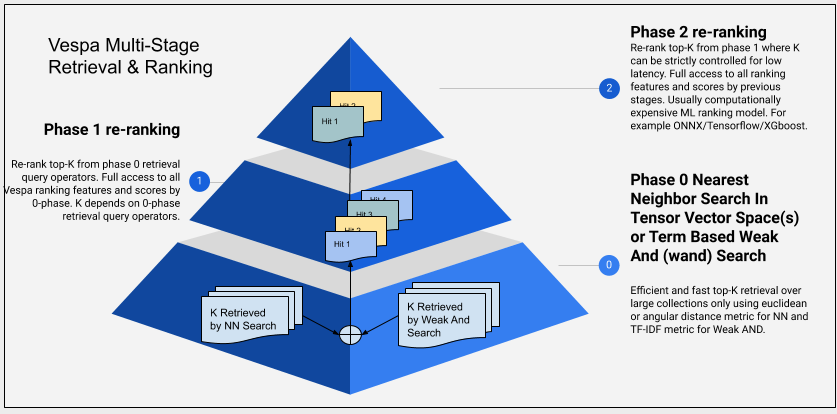

Vespa supports multiple rank phases:

- Some specialized query operators can do limited ranking to speed up retrieval of top matches by discarding low-quality matches early in the matching phase.

- Ranking at each content node in a first-phase expression; configured by rank profiles with ranking expressions working on scalars, tensors or matching features, possibly also excluding low-quality matches.

- Re-ranking of the best hits at each content node using a second-phase ranking (configured in rank profiles together with the first-phase expression).

- Re-ranking of the top hits from the content nodes in the stateless container using a configured expression (usually with an AI-trained ONNX model), see global-phase below.

- For totally customized ranking it's possible to implement re-ranking of the top hits from the content nodes using a custom stateless searchers. See concrete example in reranking in searcher.

Simple ranking on content nodes

Normal ranking will always start by having one ranking expression

that is evaluated on the content nodes. This is configured in

the rank-profile section of a

schema

as a first-phase expression. This expression can

use various rank features

with information extracted during the matching phase to evaluate

the quality of each hit.

Because the first-phase is computed for every match, the cost is bounded

only by the number of documents on each content node multiplied with

the complexity of the first-phase expression; therefore the expression

needs be simple and cheap to allow scaling to large amounts of documents.

Two-phase ranking on content nodes

While some use cases only require one (simple) first-phase

ranking expression, for more advanced use cases it's possible to

add another second-phase ranking expression in a

rank-profile

in the schema.

This enables more expensive computations than would be feasible

to run as a first-phase computation, with predictable

upper bounds for the cost. This phase runs only on the most

promising hits as selected by the first-phase ranking, so it's

important to have an appropriate first-phase, with good correlation

between first-phase and second-phase expressions.

By default, second-phase ranking (if specified) is run on the 100 best hits from the first-phase ranking per content node, after matching and before information is returned to the container. The number of hits to rerank can be configured as well in the rank-profile. Example:

schema myapp {

…

rank-profile title-freshness inherits default {

first-phase {

expression {

nativeRank(title) + freshness(timestamp)

}

}

second-phase {

expression {

xgboost("my-model.json")

}

rerank-count: 50

}

}

}

In this example, the first phase uses the text matching feature nativeRank scoped to the title field plus one of the built-in document ranking features named freshness over a timestamp field which stores the epoch time in second resolution. For each content node, the top 50 hits from the first phase function is re-ranked using a trained xgboost model.

Using a global-phase expression

Using a rank expressions configured as a

global-phase

in the rank-profile section of a schema, you can add

a ranking phase that will run in the stateless container after

gathering the best hits from all content nodes; this can be used

instead of or in addition to

second-phase ranking.

The global-phase can also perform

cross-hit normalization

to combine unrelated scoring methods.

By default, global-phase ranking runs on the 100 globally best hits for

a schema; this can be tuned in the rank-profile using

rerank-count

or per-query using the

ranking.globalPhase.rerankCount

query property.

This phase is optimized for running large ONNX models, taking some input data from the document and some from the query, and finding a score for how well they match. It's possibly to specify gpu-device to get GPU-accelerated computation of the model as well. You can compute or re-shape the inputs to the ONNX model in a function if necessary, and use the output in some further calculation to compute the final score.

Note that both input and output from ONNX models must always be some sort of tensor (in the simplest case with 1 dimension of fixed size 1) so at minimum the output tensor must be reduced to a simple number to get a ranking score.

If you have large and complex expressions instead of an ONNX model, it's often better to use the highly optimized second-phase computation on content nodes. You can also force a sub-expression to be computed on the content nodes by making it a function and adding it as a match-feature in the ranking profile.

schema myapp {

document myapp {

field per_doc_vector type tensor<float>(x[784]) {

indexing: attribute

}

…

}

…

rank-profile with-global-model inherits default {

first-phase {

expression: nativeRank(title)

}

inputs {

query(per_query_vector) tensor<float>(d0[32])

}

function per_doc_input() {

# simple reshaping: ONNX input wants the dimension name "d0"

expression: rename(attribute(per_doc_vector), x, d0)

}

onnx-model my_ranking_model {

file: files/my_ranking_model.onnx

input "model_input_1": per_doc_input

input "model_input_2": query(per_query_vector)

output "model_output_1": out

}

global-phase {

expression {

sum(onnx(my_ranking_model).out)

}

rerank-count: 50

}

}

}

Cross-hit normalization including reciprocal rank fusion

The ranking expressions configured for global-phase may perform cross-hit normalization of input rank features or functions. This is designed to make it easy to combine unrelated scoring methods into one final relevance score. The syntax looks like a special pseudo-function call:

-

normalize_linear(my_function_or_feature) -

reciprocal_rank(my_function_or_feature) -

reciprocal_rank(my_function_or_feature, k) -

reciprocal_rank_fusion(score_1, score_2 ...)

rank-profile with-normalizers inherits with-global-model {

function my_bm25_sum() {

expression: bm25(title) + bm25(abstract)

}

function my_model_out() {

expression: sum(onnx(my_ranking_model).out)

}

global-phase {

expression {

normalize_linear(my_bm25_sum) + normalize_linear(my_model_out) + normalize_linear(attribute(popularity))

}

rerank-count: 200

}

}

The normalize_linear normalizer takes a single argument which must be

a rank-feature or the name of a function. It computes the maximum and minimum

values of that input and scales linearly to the range [0, 1], basically using

the formula output = (input - min) / (max - min)

The reciprocal_rank normalizer takes one or two arguments; the first

must be a rank-feature or the name of a function, while the second (if present)

must be a numerical constant, called k with default value 60.0.

It sorts the input values and finds their rank (so highest score gets

rank 1, next highest 2, and so on). The output from reciprocal_rank is computed

with the formula output = 1.0 / (k + rank) so note that even the best

input only gets 1.0 / 61 = 0.016393 as output with the default k.

The reciprocal_rank_fusion pseudo-function takes at least two arguments

and expands to the sum of their reciprocal_rank; it's just a

convenient way to write

reciprocal_rank(a) + reciprocal_rank(b) + reciprocal_rank(c)as

reciprocal_rank_fusion(a,b,c)for example.

Stateless re-ranking

If the logic required is not suited for the global-phase above, it's possible to write stateless searchers which can re-rank hits using any custom scoring function or model. The searcher can also blend and re-order hits from multiple sources when using federation of content sources.

The searcher might request rank features calculated by the content nodes to be returned along with the hit fields using summary-features. The features returned can be configured in the rank-profile as summary-features.

The number of hits is limited by the query api hits parameter and maxHits setting. The hits available for container level re-ranking are the global top ranking hits after content nodes have retrieved and ranked the hits and global top ranking hits have been found by merging the responses from the content nodes.

Top-K Query Operators

If the first-phase ranking function can be approximated as a simple linear function, and the query mode is any, the Weak And/WAND implementations in Vespa allows avoiding fully evaluating all the documents matching the query with the first-phase function. Instead, only the top K hits using the internal operator ranking will be exposed to the first-phase ranking function.

Both wand implementations accepts a targeted number of hits (top N). Each content node will expose all hits that were evaluated to the first-phase ranking function, while skipped documents will not. The skipped set are the ones that matches the query, but which cannot outperform any of the already collected hits on the internal scoring heap. Due to not fully evaluating all documents matching the query, the total hit count becomes inaccurate when using weakAnd/Wand.

The nearest neighbor search operator is also a top k retrieval operator which does ranking (by distance) and retrieval in one process and exposes only a small subset of the matching documents to the first-phase ranking function.

Choosing phased ranking functions

A good ranking expression will for most applications consume too much CPU to be runnable on the entire result set within the latency budget/SLA. The application ranking function should hence in most cases be a second phase function. The task then becomes to find a first phase function, which correlates sufficiently well with the second phase function - this to ensure that relevance is not hurt too much by not evaluating the real ranking function on all the hits.

Rank phase statistics

Use match-features and summary-features to export detailed match- and rank-information per query. This requires post-processing and aggregation is an external system for analysis.

To evaluate how well the document corpus matches the queries, use mutable attributes to track how often each document survives each match- and ranking-phase. This is aggregated per document and makes it easy to analyse using the query and grouping APIs in Vespa - and no other processing/storage is required.

A mutable attribute is a number where an operation can be executed in 4 phases:

- on-match

- on-first-phase

- on-second-phase

- on-summary

The common use case is to increase the value by 1 for each execution. With this, it is easy to evaluate the document's performance to the queries, e.g. find the documents that appear in most queries, or the ones that never matched - run a query and order by the mutable attribute.

This example is based on the quickstart.

It uses 4 attributes that each track how many times a document participates in any of the 4 phases.

This is tracked only if using rank-profile rank_albums_track in the query:

schema music {

document music {

field artist type string {

indexing: summary | index

}

field album type string {

indexing: summary | index

}

field year type int {

indexing: summary | attribute

}

field category_scores type tensor<float>(cat{}) {

indexing: summary | attribute

}

}

field match_count type long {

indexing: attribute | summary

attribute: mutable

}

field first_phase_count type long {

indexing: attribute | summary

attribute: mutable

}

field second_phase_count type long {

indexing: attribute | summary

attribute: mutable

}

field summary_count type long {

indexing: attribute | summary

attribute: mutable

}

fieldset default {

fields: artist, album

}

rank-profile rank_albums inherits default {

first-phase {

expression: sum(query(user_profile) * attribute(category_scores))

}

second-phase {

expression: attribute(year)

rerank-count: 1

}

}

rank-profile rank_albums_track inherits rank_albums {

mutate {

on-match { match_count += 1 }

on-first-phase { first_phase_count += 1 }

on-second-phase { second_phase_count += 1 }

on-summary { summary_count += 1 }

}

}

rank-profile rank_albums_reset_on_match inherits rank_albums {

mutate {

on-match { match_count = 0 }

}

}

rank-profile rank_albums_reset_on_first_phase inherits rank_albums {

mutate {

on-match { first_phase_count = 0 }

}

}

rank-profile rank_albums_reset_on_second_phase inherits rank_albums {

mutate {

on-match { second_phase_count = 0 }

}

}

rank-profile rank_albums_reset_on_summary inherits rank_albums {

mutate {

on-match { summary_count = 0 }

}

}

}

$ vespa query \ "select * from music where album contains 'head'" \ "ranking=rank_albums_track"

Usage

The framework is flexible in use, the normal use case is:

-

Reset the mutable attribute on all content nodes -

use searchPath

to make sure all nodes are reset by sending a query using a rank profile that resets the value.

For each phase, run a query that matches all documents, and reset the attribute - e.g.:

$ for phase in match first_phase second_phase summary; do \ for node in {0..3}; do vespa query \ "select * from music where true" \ "ranking=rank_albums_reset_on_$phase" \ "model.searchPath=$node/0"; \ done \ doneAlternatively, run a query against a group and verify that coverage is 100%. -

Run query load, using the tracking rank-profile, like

rank_albums_trackabove - Run queries using sorting or grouping on the mutable attributes.

To initialize a mutable attribute with a different value than 0 when a document is PUT, use:

field match_count type long {

indexing: 7 | to_long | attribute | summary # Initialized to 7 for a new document. Default is 0.

attribute: mutable

}

To dump values fast, from memory only (assuming the schema has an id field):

document-summary rank_phase_statistics {

summary id {}

summary match_count {}

summary first_phase_count {}

summary second_phase_count {}

summary summary_count {}

}

$ vespa query \ "select * from music where true" \ "presentation.summary=rank_phase_statistics"